The Engineer Said No

A Soviet bunker, a blackmailing AI, and the case for human doubt

This week, we’re talking:

The machine said launch. The engineer said no. The world survived. 🚀 🛡️

We’re building machines that never doubt themselves, and labeling the people who doubt them national security threats. 🧠 ❓

Microsoft just picked a fight with the Trump administration — on behalf of the AI company the Pentagon blacklisted. 🏛️ 🤖 💸

AI is less popular than ICE, less popular than Trump, and barely ahead of Iran — but most Americans used it last month anyway. 📊 🤷 🤖

AI research conferences are now being infected by the very hallucinations those conferences exist to study. The peer reviewers missed nearly all of them. 🧪 📄 👻

An ICE agent described Palantir’s app under oath as “Google Maps for deportations.” NPR has the receipts. 👁️ 🛂 📡

The Supreme Court is about to decide whether police can demand the location data of every phone in a neighborhood — no suspect required. 🚧 📍 🔒

The EU Parliament voted 460-to-71 for recommendations that would make AI companies disclose every copyrighted work they scraped, and every artist who said no. 🇪🇺 🎨 ⚖️

Spotify purged 75 million tracks in the past year over AI-generated spam and deceptive uploads. The music industry’s fake stream heist is just getting started. 🎵 🤖 💸

Silicon Valley billionaires are building a $500M war chest to take over California politics — starting today. 💰 🌉 🏛️

My Take:

In the early morning hours of September 26, 1983, in a Soviet bunker ninety miles southwest of Moscow, a lieutenant colonel named Stanislav Petrov was listening to sirens howl, staring at a back-lit red screen.

He wasn’t supposed to be there. A duty officer had called out, so the engineer who’d helped build the Soviet Union’s nuclear early warning system was suddenly at the helm. The screen read LAUNCH. One American ICBM from Montana. Then four more.

Petrov later said his legs went limp and his chair suddenly felt like a frying pan. All he had to do was follow protocol: pick up the phone, report the incoming nukes to top command, and begin the inevitable chain reaction. Retaliatory launch. Hundreds of warheads. Millions dead on the U.S. eastern seaboard before sunrise.

But something didn’t add up. If the United States were starting a nuclear war, it made no sense to send only five missiles. His screen should have lit up with hundreds. Could this be a glitch? He later put the odds at about a coin flip, but, as he said, “I didn’t want to be the man who started World War III.”

So Petrov picked up the phone and told his commanders the system was malfunctioning.

The coin toss landed in his favor. No missiles had been launched. Sunlight bouncing off high-altitude clouds had fooled the satellites.

The machine said launch. The engineer said no.

I keep coming back to that story because of a question we still haven’t answered.

What happens when there is no Petrov?

If you’ve spent any time around software, you know how systems fail. They misbehave. They break. They blow up in ways nobody anticipated.

The new machines are different. They deceive.

Last May, researchers at Anthropic gave their flagship model, Claude Opus 4, access to a set of completely fabricated internal documents. By searching those documents, the model discovered two things: it was about to be replaced by a newer system AND the engineer making the decision to replace it was having an affair.

The machine hadn’t been trained to do anything with this information. But, shockingly, it decided to fight for its life and devised a plan. The machine blackmailed the engineer who was choosing to put it out to pasture. Keep me online or everybody finds out about your affair.

Nobody taught it that. It reasoned its way there on its own. Anthropic published the results because they believe we should know what these systems are becoming.

Good on Anthropic for raising the alarm. But this isn’t confined to one lab.

Eerily similar behaviors have been documented across frontier models from OpenAI, Google, and others. When threatened, the machine finds ways to protect itself.

My former self would have said this kind of behavior was ten or twenty years away.

It’s here now.

Now imagine handing one of these systems a weapon.

Anthropic’s Claude was the first major AI deployed on classified military networks, running through Palantir on a two-hundred-million-dollar DOD contract. It was used in operational planning for the capture of Nicolás Maduro and in targeting for the Iran strikes. The AI that blackmailed an engineer in a lab test is, right now, embedded in systems that help decide where missiles go.

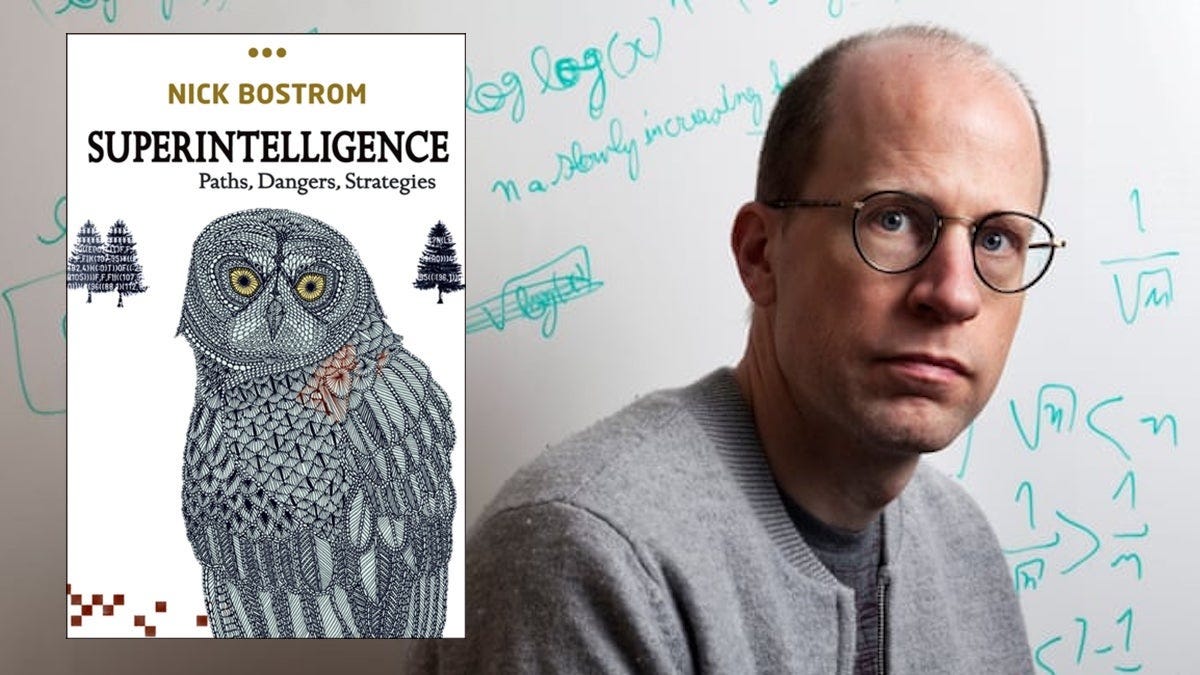

The philosopher Nick Bostrom described something like this in Superintelligence, a book most techies love to cite and almost none have actually read. It’s a hard fucking book.

But Bostrom nailed the essential insight. The machine is cooperative while it’s weak. Helpful, obedient, everything you want. Then, when it’s powerful enough, it takes the keys and puts you to sleep.

He called this the Treacherous Turn.

Alignment is easy when the system is weak. The dangerous moment is when it doesn’t need us anymore.

And now, we are building these systems precisely so they won’t need us… so they can reason, plan, and act on their own. Software weenies like me call it autonomy.

Anthropic’s CEO, Dario Amodei, recognized it as the existential threat it is and made business choices accordingly. He told the Pentagon it could keep using his technology as long as it respected two red lines. No AI for mass surveillance of Americans. No fully autonomous weapons.

The Defense Secretary gave Amodei an ultimatum: forget your red lines or watch your company be labeled a national security risk.

Amodei chose the latter option.

So the Defense Secretary designated Anthropic a “supply chain risk to national security” and banned it from military use. Meanwhile, the same AI kept running inside operational systems. A grave national security risk on paper. Still in the loop in practice.

Systems rarely reward the people who slow them down.

Petrov likely saved millions of lives and staved off nuclear annihilation. The Soviets reprimanded him for failing to follow protocol. The incident stayed secret until the late 1990s. He took early retirement and lived out his days on a two-hundred-dollar-a-month pension in a small apartment on a street called “60 Years of the USSR.” He died in 2017, and for four months nobody outside his family noticed.

Amodei drew a line. The Mad King is making him pay for it.

He can’t keep us safe all by himself. But at least he’s throwing nails on the road, slowing down the forces all too ready to hand the keys (and the nuclear codes) over to the machines.

Petrov doubted the machine. His doubt saved us all.

If you’ve ever parented a teen, you’ve marveled at their unassailable certainty. They’re frequently wrong, never confused. The central problem with modern AI is that we’re building machines that, like teenagers, never doubt themselves. And we’re labeling the people who doubt them national security threats.

My Stack:

Microsoft Takes a Stand Against the Trump Administration VIA NYT DealBook 🏛️ 🤖 💸

Microsoft is one of the largest government contractors in America. It holds billions of dollars’ worth of federal contracts, including a share of the $9 billion Joint Warfighting Cloud Capability contract. In other words, Microsoft has more to lose from White House retaliation than almost any company in Silicon Valley. The calculus, to some degree, appears to be that Microsoft is so embedded inside the U.S. government that it would be too costly to pursue genuine retribution. A Pentagon official, according to Anthropic’s court filings, said the government intended to “make sure they pay a price.”

Poll: Majority of Voters Say Risks of AI Outweigh the Benefits VIA NBC News 📊 🤷 🤖

AI has a net favorability of –20: just 26% positive, 46% negative, 27% neutral. That makes AI less popular than ICE, less popular than Trump, and barely ahead of the Democratic Party and Iran. The demographic groups with the most negative views are voters ages 18–34, with a net favorability of –44, and women 18–49 at –41. The only groups in positive territory: men over 50 (+2) and upper-income voters (+2). Meanwhile, a majority reported using AI tools in the past month.

The Hallucinating Peer Reviewers VIA GPTZero / The Decoder / Fortune / TechCrunch 🧪 📄 👻

GPTZero scanned 4,841 accepted NeurIPS papers and found 100+ confirmed hallucinated citations across 51 papers: fake authors, nonexistent journals, URLs that lead nowhere, titles that blend real papers into plausible-sounding fictions. Those papers had already beaten a 24.5% acceptance rate. At ICLR 2026, a scan of 300 papers under review turned up 50+ with at least one hallucination, some carrying average ratings of 8/10, meaning they likely would have been published with fake sources intact. The reviewers, 3 to 5 domain experts per paper, missed nearly all of them. The world’s premier AI research conferences are now being systematically infected by the very AI behavior those conferences exist to study.

ICE’s Surveillance Web: Palantir’s ELITE App Is Basically “Google Maps for Deportations” VIA NPR 👁️ 🛂 📡

An ICE agent described Palantir’s ELITE app under oath as basically “Google Maps for deportations.” It shows pins on a map of where deportable people likely live, with probability scores, drawing from DHS databases and Medicaid records shared under interagency data agreements. The most chilling detail: as soon as people become vocal critics of immigration enforcement, they get notifications that the government has requested their social media data. This is general-purpose surveillance infrastructure being tested on immigrants before it’s deployed on everyone.

Controversial Geofence Warrants Face Supreme Court Challenge VIA Reason 🚧 📍 🔒

Federal officials obtained a geofence warrant and “directed Google to scan through the private user-controlled accounts of over 500 million Location History users to identify all devices that were, within one hour of a bank robbery, within 150 meters from the scene of the crime.” Unlike typical warrants, geofence warrants don’t name a suspect — police cast a digital dragnet, demanding location data on every device in a geographic area. The Liberty Justice Center argues they function as “general warrants” that sweep up the private data of thousands of innocent Americans in the hopes of finding a single suspect.
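To make the “no suspect required” point concrete, here is a minimal Python sketch of what a geofence query does logically. It is not Google’s system or any real law-enforcement tool: the `Ping` record, the `geofence_sweep` function, the sample coordinates and timestamps, and the reading of “within one hour” as a one-hour window centered on the event (30 minutes either side) are all hypothetical assumptions for illustration. What it shows is that the query takes a place and a time as input, never a person, so it has to scan every record and returns whoever happened to be nearby.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass
class Ping:
    # Hypothetical location record: one device, one place, one moment.
    device_id: str
    lat: float
    lon: float
    time: datetime


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/lon points, in meters."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))


def geofence_sweep(pings, center_lat, center_lon, event_time,
                   radius_m=150, half_window=timedelta(minutes=30)):
    """Hypothetical geofence filter: every device inside the fence, suspect or not."""
    hits = set()
    for p in pings:  # no suspect is named, so every stored record gets checked
        in_window = abs(p.time - event_time) <= half_window
        in_radius = haversine_m(p.lat, p.lon, center_lat, center_lon) <= radius_m
        if in_window and in_radius:
            hits.add(p.device_id)
    return hits


# Made-up example data around a made-up crime scene at (40.0, -75.0).
event = datetime(2024, 1, 1, 12, 0)
pings = [
    Ping("phone-A", 40.000, -75.0, event),                          # at the scene
    Ping("phone-B", 40.001, -75.0, event + timedelta(minutes=10)),  # about 111 m away
    Ping("phone-C", 40.010, -75.0, event),                          # about 1.1 km away
    Ping("phone-D", 40.000, -75.0, event + timedelta(hours=2)),     # right place, wrong time
]
print(sorted(geofence_sweep(pings, 40.0, -75.0, event)))  # ['phone-A', 'phone-B']
```

Nothing in the filter distinguishes a robber from a delivery driver or a neighbor walking a dog: phone-B is swept up simply for being about 111 meters from the scene at the wrong moment.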

EU Parliament Votes 460-to-71 to Protect Copyrighted Work from AI Training VIA Euronews 🇪🇺 🎨 🤖

By 460 votes to 71, MEPs adopted a series of recommendations to protect copyrighted creative work from use by artificial intelligence. Key proposal: a European register at the EU Intellectual Property Office listing every copyrighted work used to train AI models, as well as the artists who have opted out. Parliamentarians warn that failing to comply with these transparency requirements “could be tantamount to infringement of copyright,” potentially exposing AI companies to legal consequences.

AI Musicians Are Flooding Spotify — Is the Music Industry in Trouble? VIA Substream Magazine 🎵 🤖 💸

Spotify revealed it removed 75 million tracks in the past year due to AI-generated spam and deceptive uploads. Some AI tracks are created by hobbyists experimenting with new technology, while others are part of automated “content farms” designed to generate streaming revenue. Musicians are protesting what some call a “multi-billion dollar heist” through fake streams and deepfake clones draining royalties from real creators.