What The Man Who Coined AI Knew That We Keep Forgetting

John McCarthy saw the wall that recursive self-improvement can't climb. Software is hitting it now.

This week, we’re talking:

The founder of AI posed a puzzle to Stanford’s best students. Their answer was elegant… and completely wrong. That same mistake is now playing out at massive scale. 🚁 🧠

Claude can theoretically rewrite Salesforce in a month. But AI-generated code has 1.7x more errors and review times are up 93%. We don’t have a code problem. We have a trust problem. 🤖 🐛 🛡️

Anthropic told the Pentagon to kick rocks over mass surveillance and autonomous weapons. The Pentagon blacklisted them. OpenAI rushed in to fill the void. If you haven’t been paying attention, here’s a tl;dr in under two minutes. 🤖 🛡️ ⚔️

Jack Dorsey cut half of Block and the stock jumped 24%, but another number jumped too: the share of American workers afraid of losing their job to AI, up from 28% to 40%. That number has gotten less press but, I think, carries more consequence. 🧱 📉 🤔

Three companies captured 83% of all global venture capital last month. Three. 💰 💰 💰

The Supreme Court just slammed the door on AI authorship. No human, no copyright. Period. 🤖 🚫 ©️

Geofence warrants hit the Supreme Court, and Google says it’s objected to more than 3,000 of them. 📍 ⚖️ 🔍

China’s new five-year plan mentions AI over 50 times. Gizmodo’s headline: “More AI, Less US.” 🇨🇳 🤖 🇺🇸

78 chatbot bills are alive in 27 states. Congress has passed zero. 📜 🏛️ 🫠

My Take:

The Trick

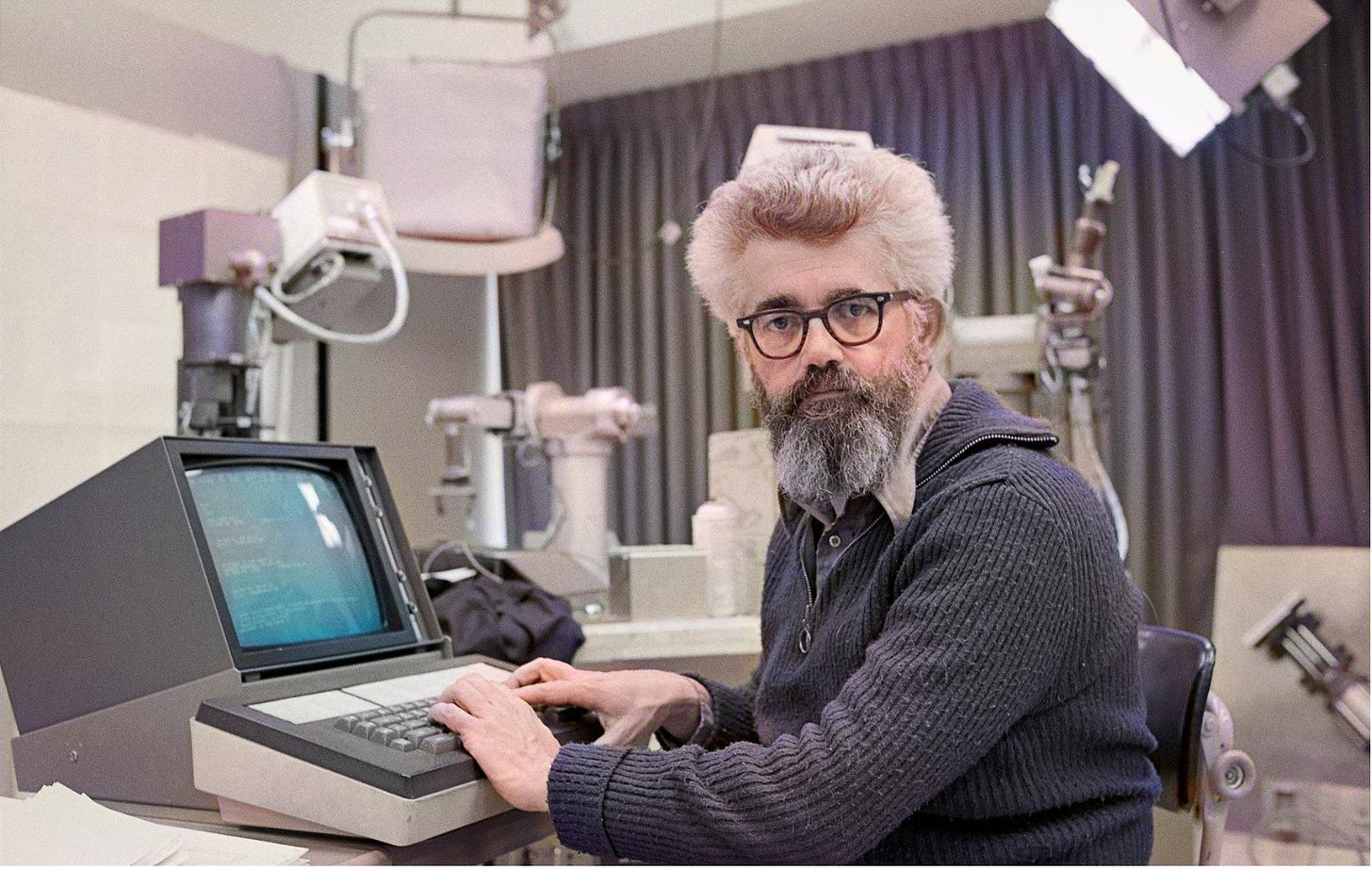

John McCarthy, who coined the term “artificial intelligence,” loved to play a trick on his students at Stanford.

He’d pose a puzzle. Twenty missionaries are stranded on one side of a river. They’re under attack. There’s a boat, but it only has five seats. How do you get them all across in time to save them?

The computer scientists would light up. Combinatorics. Optimization. Trip sequencing. They’d churn through the logic, and McCarthy would let them crank. He’d stand there, arms folded, watching them solve the posed puzzle with increasing elegance. Students would begin presenting their findings, and at some point, the Professor would interrupt.

“Tell me something,” he’d begin. “Why didn’t you guys use the helicopter?”

The world’s leading computer scientists-in-the-making would throw their hands in the air. “You didn’t tell us there was a helicopter!”

McCarthy, with a twinkle in his eye, would reply, “Yep. That’s the point.”

He called this the Frame Problem. Machines only know what you tell ’em. If you present a problem to students and don’t mention a helicopter, they won’t consider using it. If you don’t put the helicopter in the model, the model will never know it exists. No amount of optimization over the variables you did include will conjure the ones you left out. McCarthy identified this in the late 1960s. It haunted AI research for decades. And now, as we barrel toward what everyone assures us is the threshold of Artificial General Intelligence, the Frame Problem is back.
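Here’s the trick as a toy search program. It’s a sketch, not anything McCarthy wrote. I’m assuming someone has to row the boat back after each crossing, and the helicopter’s twenty seats are my invention, since the puzzle never says. The solver only explores the moves it’s handed.

```python
from collections import deque

TOTAL = 20       # missionaries in the puzzle
BOAT_SEATS = 5   # seats in the boat


def boat_moves(state):
    """Yield states reachable by one boat crossing (assumes someone rows it back)."""
    near, boat_near = state
    if boat_near:
        for k in range(1, min(BOAT_SEATS, near) + 1):
            yield (near - k, False)
    else:
        for k in range(1, min(BOAT_SEATS, TOTAL - near) + 1):
            yield (near + k, True)


def helicopter_moves(state, seats=20):
    """Hypothetical helicopter: capacity is invented, the puzzle never gives one."""
    near, boat_near = state
    if near > 0:
        yield (near - min(seats, near), boat_near)


def min_trips(move_generators):
    """Breadth-first search using ONLY the move generators it is given."""
    start = (TOTAL, True)  # everyone on the near bank, boat on the near bank
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        state, trips = queue.popleft()
        if state[0] == 0:  # nobody left on the near bank
            return trips
        for generate in move_generators:
            for nxt in generate(state):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, trips + 1))
    return None


print(min_trips([boat_moves]))                    # 9
print(min_trips([boat_moves, helicopter_moves]))  # 1
```

Nine crossings is the best possible answer with the boat alone. The one-trip answer only shows up once somebody puts the helicopter in the list.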

The Moment Everyone Missed

We can see this in the recent WEF interview between Anthropic CEO Dario Amodei and Google DeepMind CEO Demis Hassabis. They covered a lot of ground, so you’d be forgiven for missing the almost throwaway moment that I believe was the most immediately consequential. Hassabis conceded that recursive self-improvement (AI systems building the next generation of AI) might not be enough to close the gap, and that we’ll need World Models to get there.

That’s the point everyone should be chewing on. And McCarthy told us why sixty years ago.

Recursive self-improvement can only improve upon what the system already sees. If the model is chewing on the same data, recutting it, reprocessing it, feeding it back to itself, there’s no leap. There’s no new context entering the frame. It’s missionaries and a boat, over and over, forever. The helicopter never appears.

Today we don’t call it the Frame Problem. We call it the context window. But the constraint is identical: the machines only know what you tell them.

We Don’t Have a Code Shortage. We Have a Trust Shortage.

So let’s talk about what this actually looks like in practice.

Claude Opus can write roughly 350 lines of production code per minute. Run it 24/7 for 30 days and the compute costs about $40K. That’s theoretically enough to rewrite the entire Salesforce ecosystem from scratch.
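For anyone who wants to check the math, here’s a back-of-the-envelope sketch using only the two figures above (350 lines per minute, about $40K for the month). The per-thousand-lines cost is just what those numbers imply, not a published price.

```python
LINES_PER_MINUTE = 350       # figure quoted above
COMPUTE_COST_USD = 40_000    # figure quoted above, for 30 days of 24/7 use

minutes_in_30_days = 60 * 24 * 30
total_lines = LINES_PER_MINUTE * minutes_in_30_days
cost_per_thousand_lines = COMPUTE_COST_USD / (total_lines / 1000)

print(f"{total_lines:,} lines in 30 days")                    # 15,120,000 lines in 30 days
print(f"${cost_per_thousand_lines:.2f} per thousand lines")   # $2.65 per thousand lines
```

That’s about fifteen million lines in a month, at under three dollars per thousand.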

So why does Anthropic, the company that built Claude, still have 100 open software engineering positions? Why do they still pay millions to Salesforce for a CRM?

Because writing code is trivial now. Testing it, trusting it, deploying it. That’s the chokepoint. And it’s not going away.

AI-generated code produces 1.7 times more errors than human-written code, with 1.4 times more critical bugs. Review times are up 93%. TechCrunch put it bluntly: vibe coding has turned senior devs into “AI babysitters.”

The revolution is stalled. Not because we lack code. Because we lack trust.

The most experienced engineer at the most sophisticated company in the world hits the same ceiling the moment they hand work to a coding agent. Because the agent is blind.

This is the helicopter problem, playing out in production, at huge scale.

The Context Void

The coding agents generating all this output can see the code, sure. But they can’t see user behavior. Cloud configurations. Third-party integrations. The complex state of a live database. The environment in which the code runs. The stuff that breaks when chaos monkeys – real users like you and me – show up. Anyone who’s worked in software engineering has heard zealous engineers tell them, “But it worked on my machine!” And the answer has always been: “Very happy for you. And what’s your point?”

The AI can’t infer or reason about what it can’t see. At Checksum, we call this the Context Void.

The existing tools each look at one piece of the picture and call it done. PR review tools read code but don’t run it. AI testing platforms are closed systems with tests too high-level to build real confidence. Production monitoring only catches issues after launch. That’s like certifying a car is safe because the seat belt works. Great. What about the brakes? The sensors that detect walls and pedestrians? The pillars holding up the roof? The airbags? You can’t à la carte your way to quality. Seat belts are nice. But I want to know the whole car works.

What Self-Driving Cars Already Know

Which brings me to cars. Actual cars.

We don’t teach self-driving cars to drive by letting them hit pedestrians and “learning” from the mistake. We build what’s now called a World Model, a hyper-realistic simulation where the car practices millions of scenarios before it ever touches a public road. The model captures the laws of physics for objects moving on a road and the phenomena that accompany them. It knows there’s a thing called a pedestrian. A thing called a scooter. A thing called another car.

Remember the Uber autonomous vehicle that killed a woman in Tempe, Arizona? The system had a concept for a bicycle and another for a pedestrian, but it couldn’t identify a pedestrian pushing a bicycle. It had no concept of a pedestrian walking a bike, so it acted as if such a thing didn’t exist.

The helicopter, all over again.

Software needs the same architecture. We need what I call a Code World Model: a digital twin of a runtime software system. Not just the code, but the code in motion. The users, the cloud servers, the configs, the database, the services, the third-party integrations. A system that understands the “laws of physics” for a runtime environment. One that understands a specific code change might work in a vacuum but will crumble when hit with real-world network latency.
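To make the latency point concrete, here’s a deliberately tiny sketch. It is not the Code World Model, just an illustration of the gap. Everything in it is hypothetical: the `Environment` class, the three sequential service calls, the 500 ms timeout, and the latency figures.

```python
from dataclasses import dataclass


@dataclass
class Environment:
    """Hypothetical runtime conditions a code change gets checked against."""
    name: str
    network_latency_ms: float


def checkout_total_ms(env, calls=3, compute_ms=20.0):
    """Time for a made-up checkout handler making `calls` sequential service calls."""
    return calls * (env.network_latency_ms + compute_ms)


def passes(env, timeout_ms=500.0):
    """The change 'works' only if the handler finishes inside the timeout."""
    return checkout_total_ms(env) <= timeout_ms


vacuum = Environment("unit test, mocked network", 0.0)
production = Environment("production, cross-region", 180.0)

for env in (vacuum, production):
    print(env.name, checkout_total_ms(env), passes(env))
```

Same code, same logic. It finishes in 60 ms against the mocked network and in 600 ms against the simulated production latency, which blows the timeout. A check that only ever sees the first environment reports success.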

Let’s call this Software Intelligence. It’s the layer that’s missing from the AI stack. The ability to understand, verify, and autonomously improve software systems at runtime. Not just read code, but comprehend software in motion.

What We’re Building at Checksum

I know I sound like a proud founder here. (Guilty as charged.) But the conception was always this: We’re not sprinkling a little AI on an existing workflow to make it marginally better. We’re working to reconstitute, to redefine, what software quality actually means.

We need to stand up the concept of a shopping cart. A database. A workflow. A user session. The full runtime world where code meets the real world.

To fuel the Code World Model, we’ve assembled fifty terabytes of annotated, proprietary runtime data. User sessions. Screen recordings. Network traces. SDLC history. Pull requests. CI data. Browser action tuples. By training on how real users interact with software, and how systems actually fail in production, our model learns to identify impossible states and brittle logic that a standard LLM, looking only at code, would never perceive.

What makes this a flywheel: our enterprise customers pay us for continuous quality (E2E testing, CI guardrails, production monitoring), and in the process they generate the proprietary context data that fuels the Code World Model. Every new customer makes the model smarter. That data took years to accumulate; you can’t buy it, nor can you ingest or scrape it from the internet.

The LLM companies build general intelligence. We build the domain-specific world model for software. Different problem.

And here’s where the lizard eats its tail: we’re teaching a software system to learn about software systems. AI is still software. The Code World Model is software reasoning about the physics of software. Some of the philosophical questions about AGI and recursive self-improvement start to feel less abstract when you look at it that way. Maybe the path forward isn’t recursion at all. Maybe it’s context.

Continuous Quality

First there was CI/CD. Now there’s Continuous Quality. Always on. Agents that detect and fix failures without human intervention. Auto-recovery. Auto-healing. Rooted in 50TB of real-world evidence, not synthetic benchmarks. Zero flakiness. Thousands of tests that smoke out bugs in large production systems before they reach a user’s screen.

Amodei and Hassabis are right to chase AGI. But the Context Void is the crisis staring us in the face right now. While others focus on making AI write code faster, we’re building the world model to make sure code actually works in the wild. Before bugs happen. Before the users find them. Before anybody dies in Tempe.

McCarthy knew the answer sixty years ago. You have to tell the machine about the helicopter.

Or better yet, build a machine that already knows it’s there.

My Stack:

The Anthropic-Pentagon Saga Is Unprecedented in US History (Here Are the CliffsNotes in Under Two Minutes)

The DOD wanted unfettered access to Claude for “all lawful purposes.” Anthropic drew two red lines: no mass surveillance of American citizens, no autonomous weapons. The Pentagon said no company gets to “insert itself into the chain of command.” The Pentagon gave them a deadline to ditch their red lines but Anthropic did not relent. Trump blacklisted them. The administration designated Anthropic a “supply chain risk,” a label that has only ever been used against foreign adversaries. Anthropic is the first American company to receive it. Treasury, State, and HHS all told employees to stop using Claude.

OpenAI swooped in within hours and signed essentially the same deal that Anthropic turned down, with slight cosmetic adjustments. ChatGPT uninstalls spiked 295% over the weekend. Claude overtook ChatGPT in the App Store by Saturday. Altman later conceded it “looked opportunistic and sloppy.”

On the other side of the coin, defense tech companies started fleeing Claude overnight. Lockheed Martin is expected to pull Anthropic from its supply chain entirely. Oh, and the Pentagon is already using Claude in Iran. The military is actively deploying the AI tool it just branded a national security risk.

Lawfare called the legal basis for deeming Anthropic a supply chain risk DOA: the designation “is not an exercise of the authority Congress granted” and the problems are “so glaring the administration may know this won’t survive judicial review.” The Center for American Progress is now calling on Congress to investigate and pass legislation protecting citizens from AI-enabled mass surveillance before the next contract negotiation decides it for us. El fin… for now.

Sources: PBS News, Axios, CBS News, NBC News, OpenAI Blog, Fortune, TechCrunch, CNBC, MIT Technology Review, Washington Post, Lawfare, Center for American Progress

AI Jobs: The Bill Comes Due

Jack Dorsey cut half of Block (~4,000 employees) and Block’s stock jumped 24%. Oracle announced plans to slash up to 30,000 jobs to fund AI data centers. Wall Street loved that too. Meanwhile, employee fear of losing their job to AI has jumped from 28% to 40% in just two years, and a viral essay comparing this moment to February 2020 kicked off what traders are calling the “AI scare trade.” Here’s the question nobody’s asking: will humans living in perpetual fear of losing their livelihood really perform at their best while building and deploying the most consequential technology in a thousand years? The market is rewarding the cut. I think that’s shortsighted.

Sources: CNN, Bloomberg, Fortune

Three companies captured 83% of all global venture capital last month.

OpenAI ($110B), Anthropic ($30B), and Waymo ($16B). That’s $156 billion. In one month. For context, that’s roughly a third of all venture dollars deployed in all of 2025. Whatever you think about the AI bubble question, this is concentration like we’ve never seen.
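A quick sanity check on those numbers, using only the figures quoted here. The monthly and annual totals below are implied by the 83% share and the “roughly a third” comparison. They are not independently sourced.

```python
rounds_usd_billions = {"OpenAI": 110, "Anthropic": 30, "Waymo": 16}

top_three = sum(rounds_usd_billions.values())
implied_month_total = top_three / 0.83   # if the three rounds are 83% of the month
implied_2025_total = top_three * 3       # if the three rounds are about a third of 2025

print(f"Top three: ${top_three}B")                             # Top three: $156B
print(f"Implied monthly total: ~${implied_month_total:.0f}B")  # Implied monthly total: ~$188B
print(f"Implied 2025 total: ~${implied_2025_total}B")          # Implied 2025 total: ~$468B
```

Put another way, every other startup on the planet split roughly $32 billion that month.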

Sources: TechCrunch

The first geofence warrant case just hit the Supreme Court.

The ACLU, EFF, and Georgetown’s Center on Privacy & Technology filed briefs arguing geofence warrants are unconstitutional general warrants, full stop. Google filed its own brief calling them unconstitutional and revealed it has objected to more than 3,000 geofence warrants on constitutional grounds. This one could reshape digital surveillance law for a generation.

Sources: ACLU

The Supreme Court just slammed the door on AI authorship.

SCOTUS denied cert in Thaler v. Perlmutter, ending the last legal path to copyright protection for purely AI-generated work. The message is now consistent across every level of the American court system: if you want IP protection, a human has to be in the loop. Period.

Sources: Holland & Knight, Baker Donelson

China’s new five-year plan mentions AI over 50 times.

Gizmodo’s headline nailed it: “More AI, Less US.” The blueprint includes an “AI+ action plan” embedding the technology across every sector of the economy, plus hyper-scale computing clusters, humanoid robotics, 6G, brain-machine interfaces, and nuclear fusion. The state planning body claims China now leads the world in AI R&D. Whether that’s true or not, they clearly believe the race is theirs to win.

Sources: Gizmodo, The Quantum Insider

78 chatbot bills are now alive in 27 states. Congress hasn’t passed a single one.

Oregon just approved a chatbot safety bill. Vermont passed a law on AI in election materials. New York introduced a kids chatbot safety bill banning features considered unsafe for minors. Six weeks into the 2026 legislative session and the states are doing what the federal government won’t.

Sources: Transparency Coalition